Welcome back everyone 👋 and big thanks to all the new subscribers for coming along for the ride!

This is the weekly Gorilla Newsletter, where we have a look at everything noteworthy from the past week in generative art, creative coding, tech and AI, as well as a sprinkle of my own endeavors.

Enjoy - Gorilla Sun 🌸

All the Generative Things

fxhash 2.0 v2

After the necessary postponement, fxhash launched just a couple of days ago on the 14th and is now fully live with its Ethereum integration! Although there have been a couple of hiccups, things have gone relatively smoothly for the now multi-chain platform:

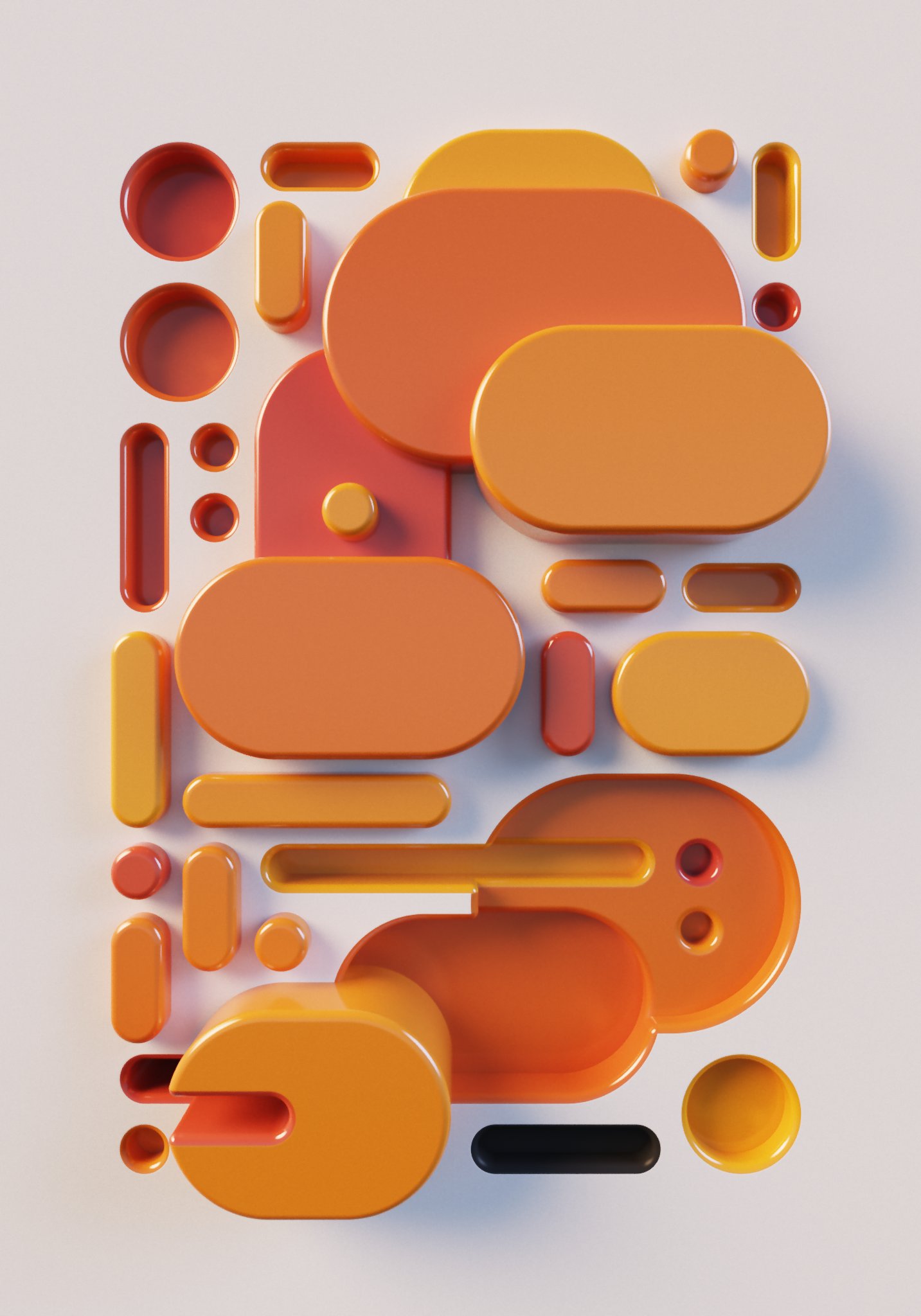

One smash hit from the first couple of days that I'd like to highlight for its virtuosity is Piter Pasma's Tender curated drop, titled Blokkendoos. You might know him as the brilliant mind behind the Universal Ray Hatcher from a couple of months ago, and he's back with another impressive feat of generative art.

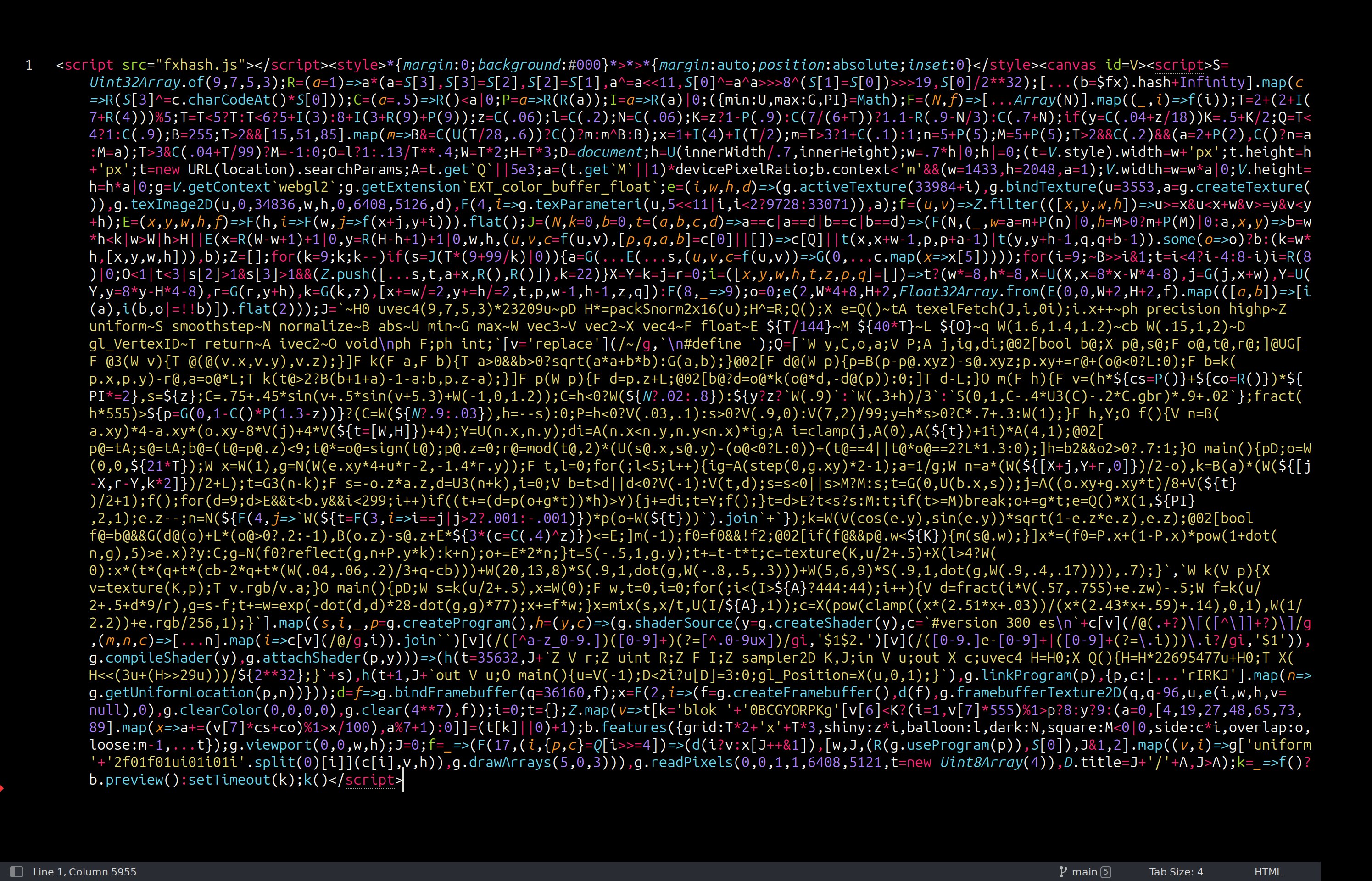

By making use of a "global illumination Monte Carlo path tracer", his project generates stunning renders of glossy shapes, and does so in only 5954 characters of code without using any external libraries; it's basically the modern-day equivalent of sorcery. In one of his tweets, Piter shares the entire size-optimized code as well as an example output:

The code for Blokkendoos: 5954 characters that render glossy graphics in the browser without any libraries

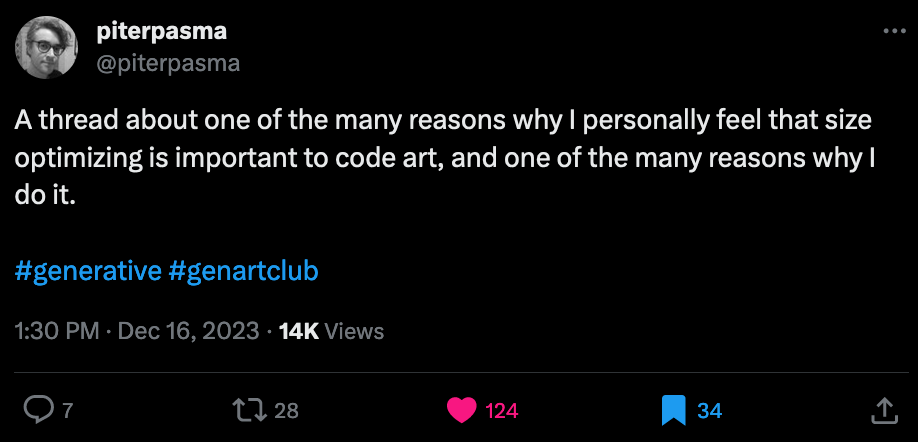
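Piter's size-optimized code is JavaScript, and I won't attempt to reproduce it here. As a rough illustration of the "Monte Carlo" part of a path tracer, here's a minimal Python sketch (my own, not taken from Blokkendoos) that estimates how much light arrives at a surface point by averaging randomly sampled directions over the hemisphere above it. The function names and the constant-sky test case are assumptions made for illustration.

```python
import math
import random

def sample_uniform_hemisphere(rng):
    """Return a random unit vector (x, y, z) with z >= 0, uniformly distributed over the hemisphere."""
    z = rng.random()  # cos(theta) uniform in [0, 1) gives uniformly distributed directions
    r = math.sqrt(max(0.0, 1.0 - z * z))
    phi = 2.0 * math.pi * rng.random()
    return (r * math.cos(phi), r * math.sin(phi), z)

def estimate_irradiance(sky_radiance, n_samples=100_000, seed=0):
    """Monte Carlo estimate of E = integral over the hemisphere of L(w) * cos(theta) dw."""
    rng = random.Random(seed)
    pdf = 1.0 / (2.0 * math.pi)  # probability density of a uniform hemisphere sample
    total = 0.0
    for _ in range(n_samples):
        w = sample_uniform_hemisphere(rng)
        cos_theta = w[2]  # the surface normal is assumed to point along +z
        total += sky_radiance(w) * cos_theta / pdf
    return total / n_samples

# Constant sky radiance of 1 in every direction.
estimate = estimate_irradiance(lambda w: 1.0)
```

With a constant sky radiance of 1, the exact answer is π, so the estimate should land close to 3.14. A full path tracer applies this kind of random sampling recursively, once per bounce, for every light path of every pixel, which is why it is so compute-hungry.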

Moreover, he shares a thread in which he addresses the importance of size-optimizing code, and explains why it isn't simply about reducing the length of a piece of code but actually serves a deeper purpose.

He also brings up the interesting notion of Kolmogorov complexity, which measures the complexity of an object by the length of the shortest computer program that produces the object as output.
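Kolmogorov complexity itself is uncomputable, so in practice the output size of a general-purpose compressor is often used as a crude, computable upper-bound stand-in. The Python sketch below is my own illustration of that proxy (not something from Piter's thread), comparing a highly regular byte string with random bytes using the standard library's `zlib` module.

```python
import os
import zlib

def compressed_size(data: bytes) -> int:
    """Length of the zlib-compressed data: a crude, computable upper-bound proxy for Kolmogorov complexity."""
    return len(zlib.compress(data, 9))

# A highly regular string: a very short program ("repeat 'ab' 5000 times") produces it.
regular = b"ab" * 5000
# Random bytes: with high probability there is no description much shorter than the data itself.
random_bytes = os.urandom(10_000)

print(len(regular), compressed_size(regular))
print(len(random_bytes), compressed_size(random_bytes))
```

The regular string shrinks to a tiny fraction of its 10,000 bytes, while the random bytes barely compress at all, mirroring the idea that a regular object has a short description and a random one does not.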

Processing Foundation Impact Report 2023

In light of Ben Fry's resignation a couple of weeks ago, and the controversial claims accompanying it that put the foundation in a precarious position, we now get clarity on its activities throughout 2023 with the recently released impact report:

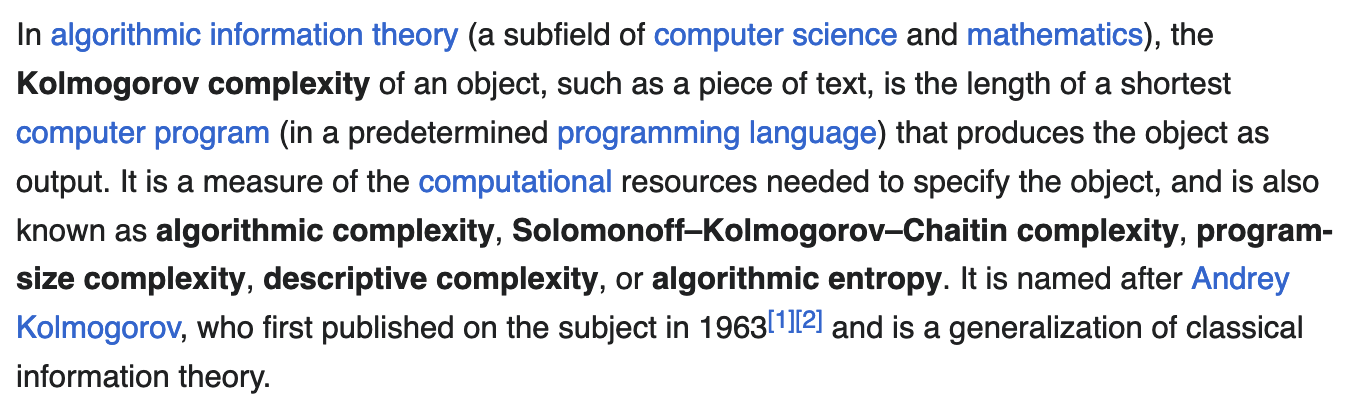

Processing's remit as a foundation has grown well beyond the initial vision of the software sketchbook, and now includes the responsibility of fostering a large, inclusive, and diverse community. In 2021, the foundation received a large spike in donations from its 20th anniversary fundraiser, in which several NFT platforms made it possible for code artists to donate directly to the foundation with their work. This led to the foundation receiving funding orders of magnitude larger than any grant money it had previously received:

With the influx of funding from our community, it was imperative to grow our core team to meet the needs of our growing community with deep focus and ensure thoughtful, equitable stewardship of foundation funds.

Naturally, that prompted a big change in infrastructure and management to ensure the long-term financial health of the foundation. The report states that the foundation can now safely continue operations for the next 12-13 years on its current budget while fairly compensating its core full-time members, and it also provides a breakdown of spending in that regard.

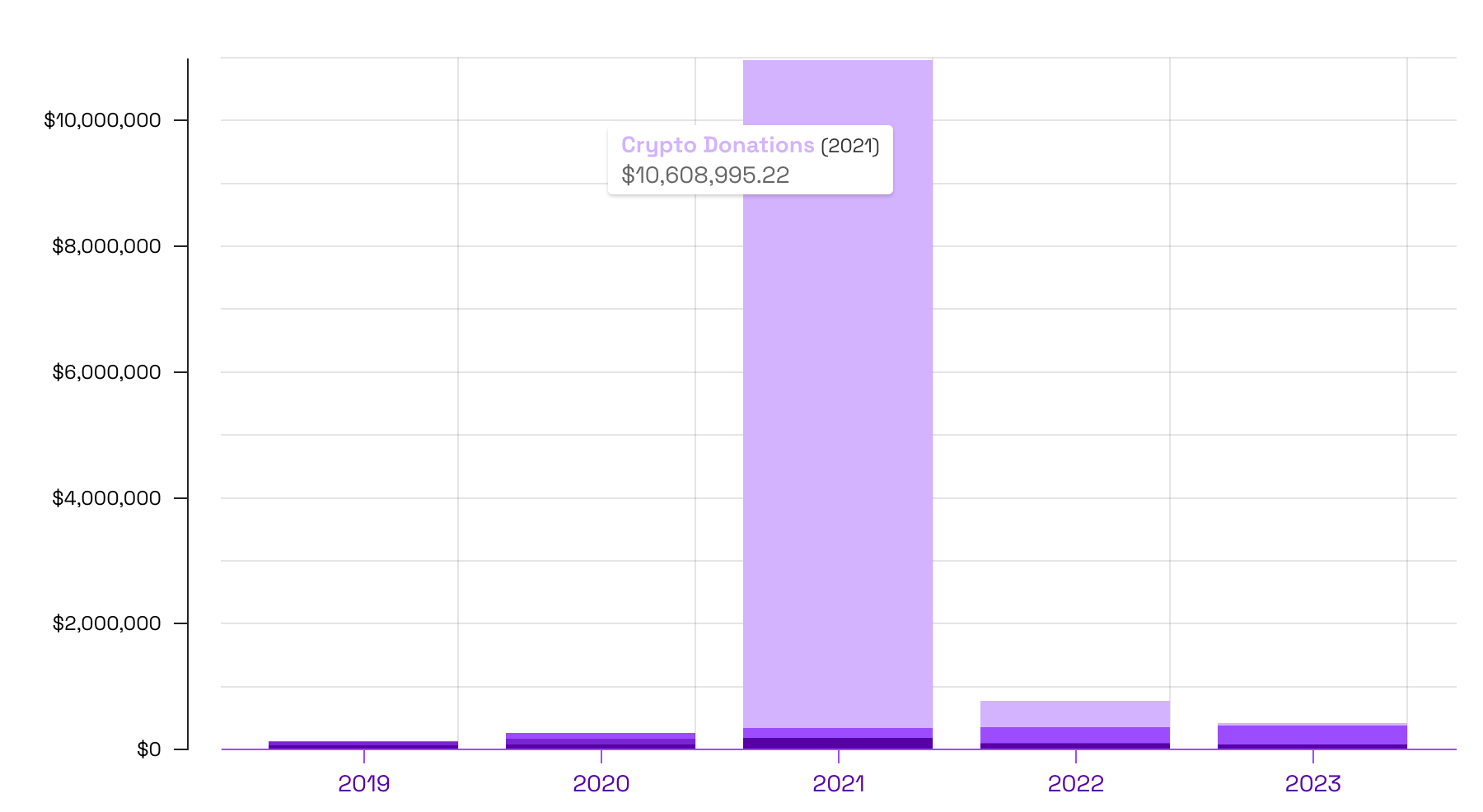

The report also shows some impressive numbers when it comes to the development of p5.js, which I believe is much more popular nowadays than the original Processing, especially since the commercialization of generative art via blockchain technology demands browser-based code art. So there's definitely been progress on the development side of things.

I applaud the transparent and comprehensive report detailing the foundation's current endeavors. I believe that it is well on track, and that we'll continue to see the community grow in the coming years.

Tyler Hobbs on Algorithmic Aesthetics

Monk gives us a look into the current mind of Tyler Hobbs with his newest interview for Le Random.

Having coined the term "Long-form Generative Art" with his seminal essay The Rise of Long-form Generative Art, Hobbs has undeniably become a household name in the genart space. Since Hobbs has always been very generous with information, it is interesting to catch up and see how his views have evolved in the meantime.

One topic that I found particularly insightful was Monk's inquiry about Hobbs' criteria for evaluating generative art, to which he offers an interesting perspective:

It needs to be worth it to see all of the images in the output set. Ideally, the work should be compelling enough for you to want to look at every single image in the output. If it's not, then you may have generated too many of them.

I believe this is a struggle shared by generative artists: striking a balance between variety and harmony, such that each individual output is interesting by itself but still ties into an overarching narrative. Hobbs' comment goes beyond this, however: it's not only important that the code creates outputs that are interesting on their own, but also that they make you want to see the rest of the collection. Making long-form projects that succeed in that regard is incredibly difficult.

Although I've made quite a few long-form generative artworks at this point, looking back, this has been one of the hardest aspects to achieve. Making long-form generative art is the opposite of a formulaic process, and each project is wildly different, so I'm not certain how exactly one would impart such a global quality to the set of outputs without making any compromises. Hobbs himself stresses that it's an ideal:

Again, that's an ideal, as my own work probably falls short of that.

I also really appreciate that Monk tackled some of the recent debates around digital vs. traditional aesthetics, seeing that Hobbs himself has experimented extensively with simulating painterly aesthetics in code. To address the topic, he uses a term that I find quite fitting and that captures a broad range of the problem: richness.

A physical canvas is already inherently rich with texture, and drawing a rectangle by hand will naturally result in something rich with imperfections, as Hobbs explains. The same cannot be said of the pristine shapes that the machine draws onto a digital canvas, which I believe is why we see so many artists resort to recreating those aesthetics to make their work more interesting.

I've experienced this in particular with my attempts to add 'grain' to some of my generative artworks. Grain is simply a method that adds random perturbations to the pixels of the canvas: a quick and easy way to add texture to a work, but in reality it only makes the artwork appear richer and often doesn't add much substance. So it's definitely a mistake to use texture as a cheap trick to make things appear richer than they actually are.

I do think it is a mistake to focus on replicating specific analog media as an end. I don't think that digital work needs to pretend to be something it's not.
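For anyone curious what such a grain pass boils down to, here's a minimal Python/NumPy sketch that adds uniform random noise to every channel value of an 8-bit image and clips the result. The function name and the `amount` parameter are my own choices for illustration; in a p5.js sketch, the same per-pixel loop would typically sit between `loadPixels()` and `updatePixels()`.

```python
import numpy as np

def add_grain(pixels: np.ndarray, amount: float = 20.0, seed: int = 0) -> np.ndarray:
    """Return a grainy copy of an 8-bit image: each channel value is shifted by uniform noise in [-amount, amount)."""
    rng = np.random.default_rng(seed)
    noise = rng.uniform(-amount, amount, size=pixels.shape)
    grainy = pixels.astype(np.float64) + noise
    # Clip back into the valid 8-bit range before converting (the cast truncates toward zero).
    return np.clip(grainy, 0, 255).astype(np.uint8)

# A flat mid-gray 64x64 RGB canvas: after the grain pass it is no longer uniform.
canvas = np.full((64, 64, 3), 128, dtype=np.uint8)
grainy_canvas = add_grain(canvas)
```

Which illustrates the point nicely: the noise is completely independent of what's actually drawn, so it adds surface texture without adding any structure.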

Again, a very insightful article by Monk, continuing to provide us with standout generative art content.

Pen and Plottery

Plotter Postcard Exchange 2023

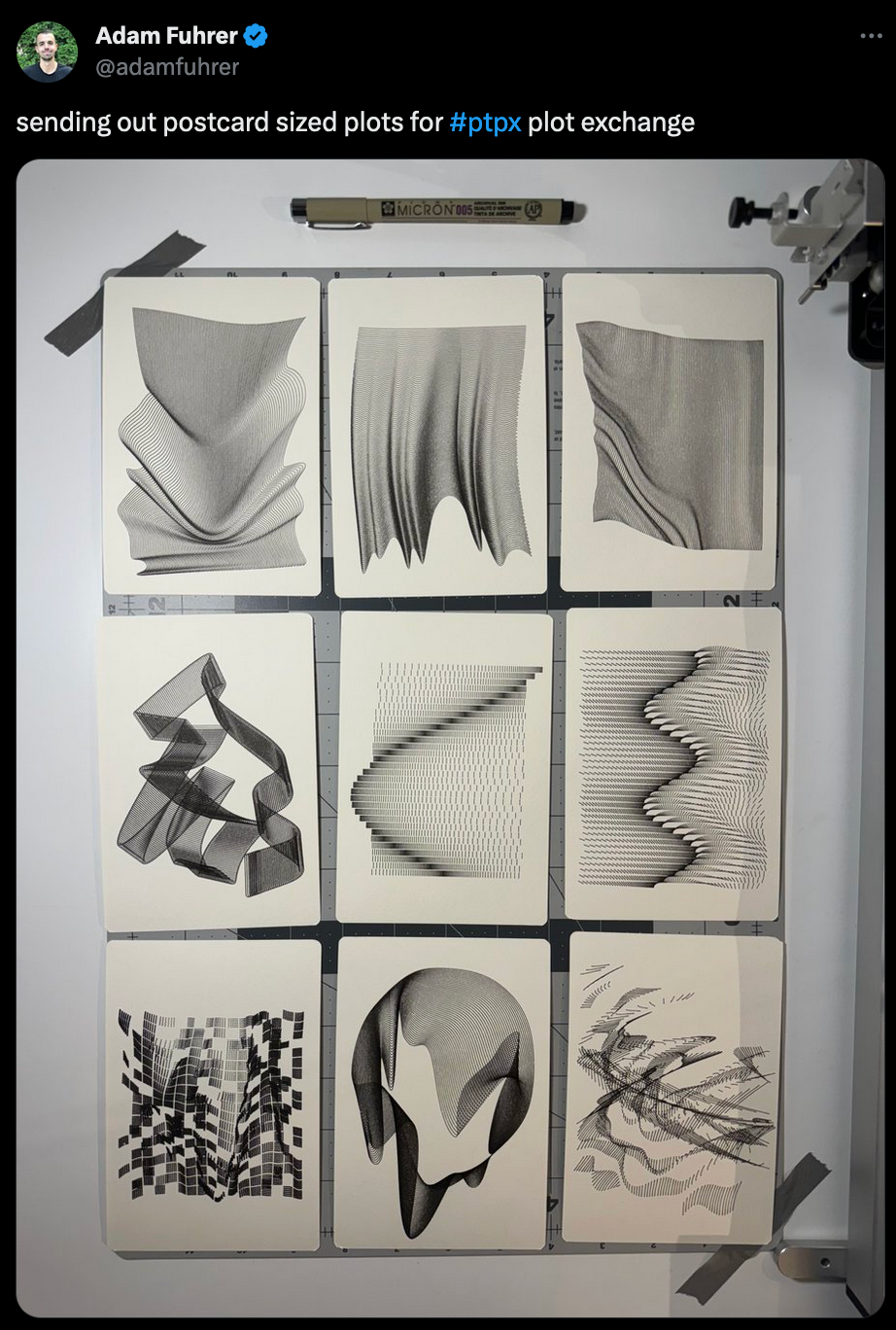

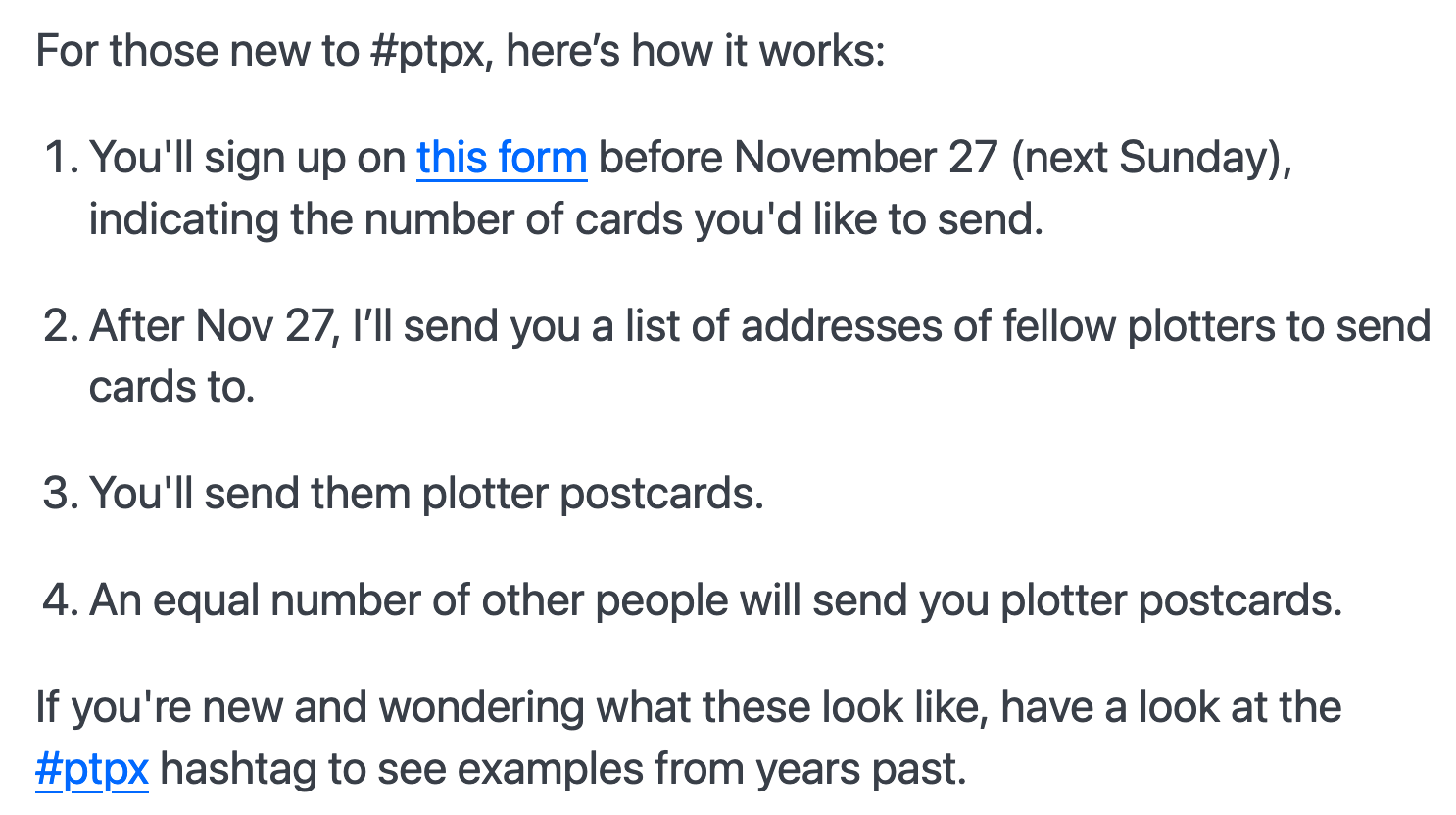

Over the weekend I suddenly came across a bunch of tweets tagged with #ptpx. Upon closer inspection, it turned out to be an annual event organized by Paul Butler, in which plotter enthusiasts from all across the world come together to exchange plotter artworks in postcard format.

Although sign-up for this year is already closed, here's a brief breakdown of how it works: via a Google Form, you indicate how many postcards you'd like to send out, and you're then matched with others with whom you'll exchange postcards. Here's how it was explained on one of the previous sign-up pages:

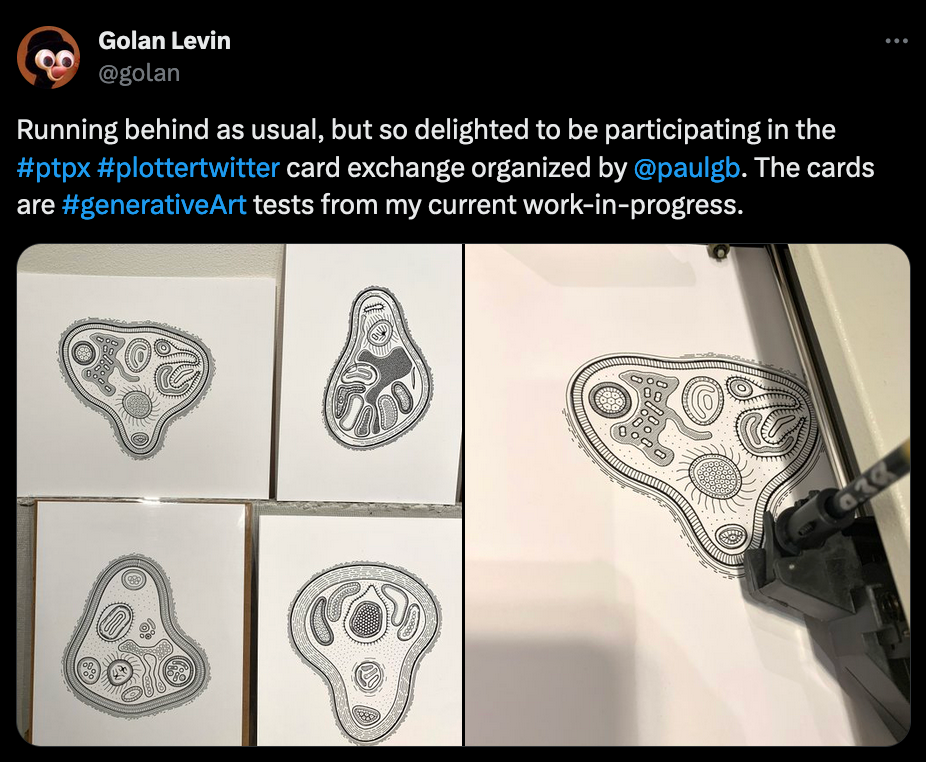

Here are two really cool examples from past years:

Convinced that you should invest in a plotter now?

Arbitrarily Deterministic feat. Licia He

Ken Consumer is back with another episode of Arbitrarily Deterministic, and this time he's joined by the prolific Licia He. The two gush about everything plotter-related, and we get an insightful look into Licia's process:

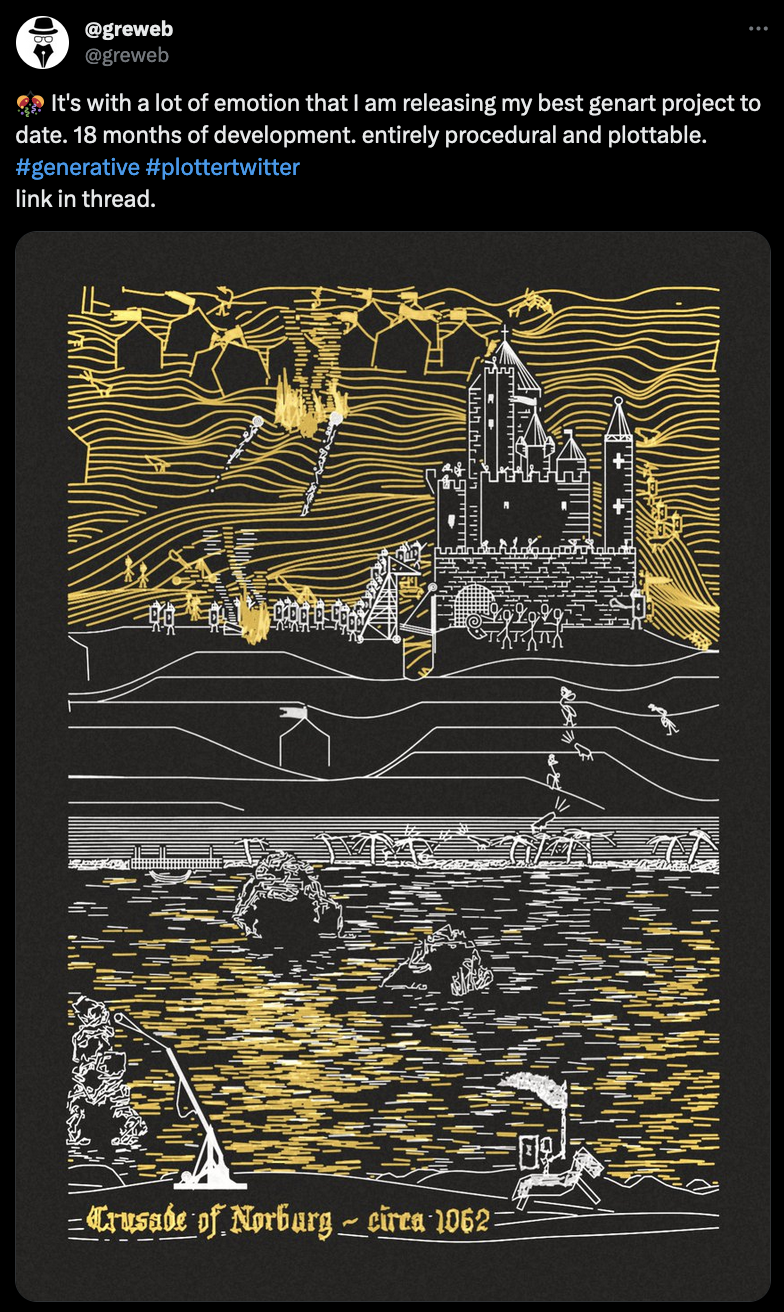

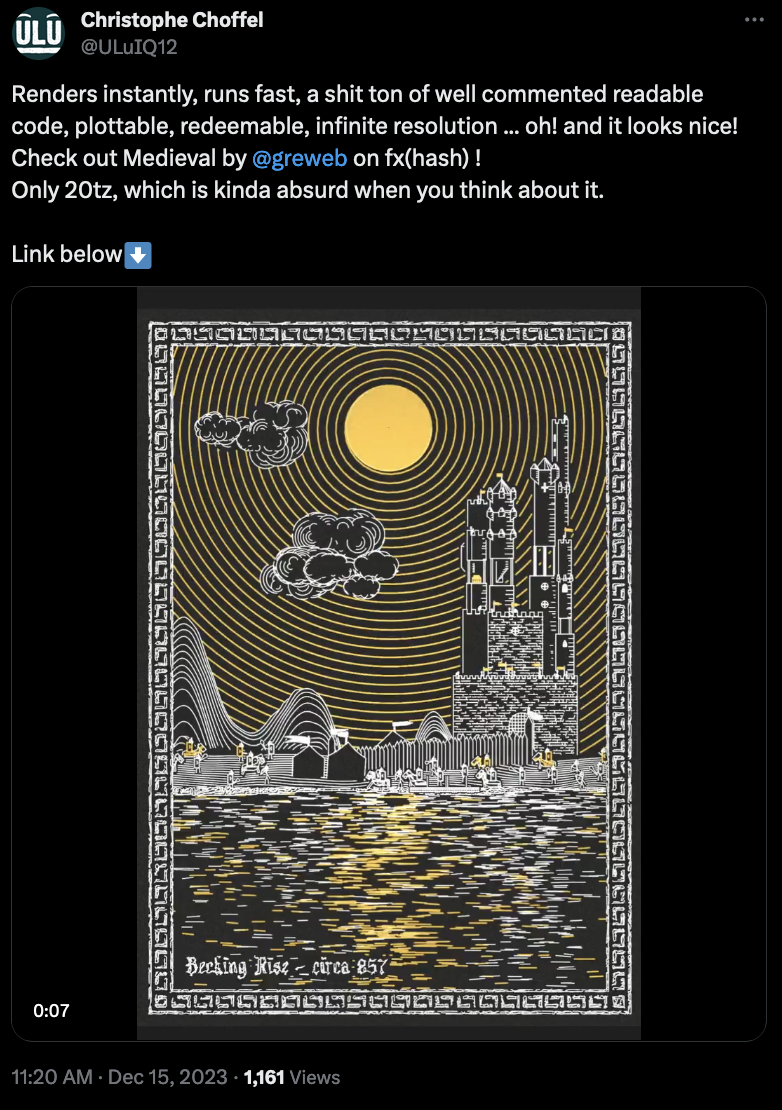

Greweb's Medieval Era

Greweb is another prolific plotter wizard, who recently released a project he's been working on for quite a while: generative depictions of medieval scenery that you can plot yourself or redeem for physical plots straight from greweb.

What's more, greweb also occasionally streams his dev process on Twitch. Although the streams are sometimes in French, they make for a great educational resource if you're looking to get into plotting. What many might not know is that greweb actually has aphantasia, which makes all of this doubly impressive.

Weekly Web Finds

Examples of Great URLs

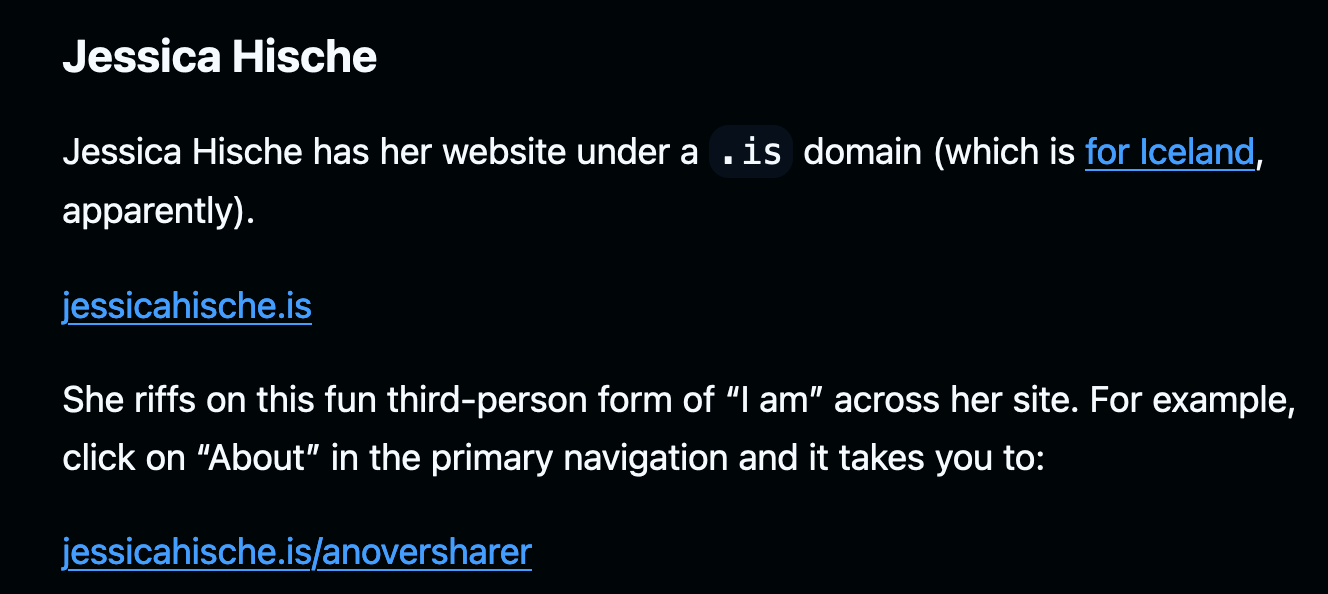

Did you know that URL design is a thing? Yes, it is. If you've ever tried to do a little SEO, you'll know how important URLs are, not just for linking back to your website, but also for conveying information to users and machines alike. Jim Nielsen lists a number of websites that have well-crafted URL structures or take a creative approach to them.

I'm kind of glad I ran into this resource before tackling the redesign of the blog (design and structure) in 2024. With the number of pages I have right now, I want to introduce a better link structure as well, and I think it'll be smart to sit down and plan it out before doing the overhaul.

For instance, if you're viewing this page in the browser, you'll notice that the URL has the prefix /blog/, which is the same for every piece of content on the blog, save the main pages. Instead, I would like this prefix to differ by type of content, such as /newsletter/ for newsletter pages, /tutorial/ for tutorials, /interview/ for interviews, etc. This helps categorize the content, further clarifies what kind of content a page contains, and will help aggregate things into individual sections.
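Here's a small Python sketch of how such a scheme could be generated: a hypothetical `PREFIXES` table maps each content type to its own prefix, a simple slug function turns titles into URL-friendly strings, and anything without a dedicated section falls back to the current /blog/ prefix. It's only an illustration of the idea, not how the blog is actually built.

```python
import re

# Hypothetical mapping from content type to URL prefix, following the scheme described above.
PREFIXES = {
    "newsletter": "/newsletter/",
    "tutorial": "/tutorial/",
    "interview": "/interview/",
}

def slugify(title: str) -> str:
    """Lowercase the title and collapse every run of non-alphanumeric characters into a single hyphen."""
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")

def content_url(kind: str, title: str) -> str:
    """Build a URL such as /tutorial/hexagonal-grids/ from a content type and a title."""
    prefix = PREFIXES.get(kind, "/blog/")  # fall back to the current catch-all prefix
    return f"{prefix}{slugify(title)}/"
```

For example, `content_url("tutorial", "Hexagonal Grids!")` returns `"/tutorial/hexagonal-grids/"`, while an unknown type like `"misc"` still ends up under /blog/.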

You don't need Javascript for that

Kilian Valkhof introduces us to the rule of least power in web design, which simply means that you should use the least powerful language suitable for a task: if you can do something with HTML + CSS, you probably should do it with HTML + CSS instead of bringing in the cavalry straight away.

Now, you'd think that you only use JS when it's really needed, but as browsers evolve, there are a lot of new HTML and CSS features that can nowadays replace JavaScript in many situations, as Valkhof demonstrates throughout the article.

Definitely bookmarking this one for later! Exploring the HTMLHell site a little more, I discovered that the article is actually part of an online advent calendar in which each day brings a new resource loaded with HTML and CSS tips and tricks. I really recommend checking it out; there's so much good stuff over there.

WebKit Features in Safari 17.2

Among web devs, it's commonly agreed that Safari sucks. This is generally because it lags severely behind other browsers in terms of feature support, or has specific behaviour that requires you to build exceptions into your website's code to account for it.

However, it seems that a bunch of new features are now supported in the latest version, 17.2.

Ideally we'd all agree to blacklist our websites from being used on Safari.

AI Corner

Can Machine Learning predict the price at Auctions

I recently discovered a scientific article by Jason Bailey, aka Artnome, in which he tackles a really interesting topic: as the title suggests, whether AI is capable of predicting prices at auctions.

Machine learning models excel at tasks that have clear input-output relationships and can, in essence, be seen as massive function approximators, which makes them great at predicting new data points from previous information. That's the case for LLMs, for instance, which are gigantic statistical models into which we feed an input sequence (the prompt) and which then generate a completing sequence, one token at a time. The same concept can be applied to other types of data, such as the valuation of real estate, where we could train a model to predict a price given information about a particular property.
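To make the real-estate example a bit more concrete, here's a minimal Python/NumPy sketch that fits a linear model to a handful of made-up sales (floor area and number of rooms mapped to a price) with ordinary least squares, and then predicts the price of an unseen property. The data and function names are hypothetical, and a real model would use far more data and features; this only shows the "learn a function from inputs to outputs" idea in its simplest form.

```python
import numpy as np

def fit_linear_model(X, y):
    """Ordinary least squares for y approximately equal to X @ w + b; returns w with b appended last."""
    design = np.column_stack([X, np.ones(len(X))])  # extra column of ones models the intercept b
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef

def predict(coef, x):
    """Apply the fitted weights and intercept to a single feature vector."""
    return float(np.dot(coef[:-1], x) + coef[-1])

# Hypothetical sales: [floor area in m^2, number of rooms] -> price.
# Prices follow 2000 * area + 10000 * rooms + 30000 exactly, to keep the example easy to check.
X = np.array([[50, 2], [70, 3], [90, 3], [120, 4], [150, 5]], dtype=float)
y = np.array([150_000, 200_000, 240_000, 310_000, 380_000], dtype=float)

coef = fit_linear_model(X, y)
estimate = predict(coef, [100, 4])
```

Because the made-up prices were generated from an exactly linear rule, the fit recovers it, and the prediction for a 100 m², 4-room property comes out at (up to floating-point error) 270,000.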

But when it comes to valuing things that even we humans struggle with, how can we train AI to do so?

In describing the art market, Plattner (1998) characterized it as having two unusual qualities. First, people spend enormous sums of money on objects that are nearly impossible to value. Second, given the limited number of potential buyers, it is difficult for artists to know if there will be any demand for the work they are creating.

Bailey goes on to explain that there actually is tangible data we can draw from for this purpose. More specifically, we'd be attempting to make AI mimic how art appraisers tackle this difficult task: by considering several contextual factors related to the artwork, and by comparing it to similar artworks that have sold within a recent timeframe. It is an incredibly insightful article and I really recommend reading it; it doesn't get too technical, and it gives further insight into the factors that play a role in the valuation of artworks, as well as how valuation can be approached as a machine learning task.
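The "comparable sales" part of that approach can be sketched as a k-nearest-neighbors estimate: find the past sales most similar to the artwork in question and average their prices. The Python sketch below is my own illustration rather than Bailey's model; the `Sale` record, the two features (assumed to be pre-scaled to comparable ranges, since Euclidean distance is sensitive to scale) and the choice of k are all assumptions.

```python
import math
from dataclasses import dataclass

@dataclass
class Sale:
    """A hypothetical past sale: a few pre-scaled numeric features plus the realized price."""
    features: tuple[float, ...]
    price: float

def estimate_from_comparables(target, sales, k=3):
    """Average the prices of the k past sales whose features are closest (Euclidean) to the target."""
    nearest = sorted(sales, key=lambda s: math.dist(s.features, target))[:k]
    return sum(s.price for s in nearest) / len(nearest)

# Made-up sales history with two features per artwork, both scaled to [0, 1].
sales = [
    Sale((0.10, 0.20), 1000),
    Sale((0.15, 0.25), 1200),
    Sale((0.90, 0.80), 9000),
    Sale((0.12, 0.22), 1100),
    Sale((0.85, 0.90), 8000),
]
estimate = estimate_from_comparables((0.11, 0.21), sales)
```

Here the three closest comparables sold for 1000, 1100 and 1200, so the estimate is their average, 1100. Recency could be folded in as well, for example by discarding or down-weighting older sales before picking the neighbors.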

In Defense of AI Art

Claus Wilke wrote a refreshing article on AI art that, in contrast to many contemporary pieces on the topic, stands in favor of the new art form. Wilke provides a breakdown of the different methods that currently exist for creating AI art and gives good arguments for why it is no less involved than other art forms.

Gorilla Updates

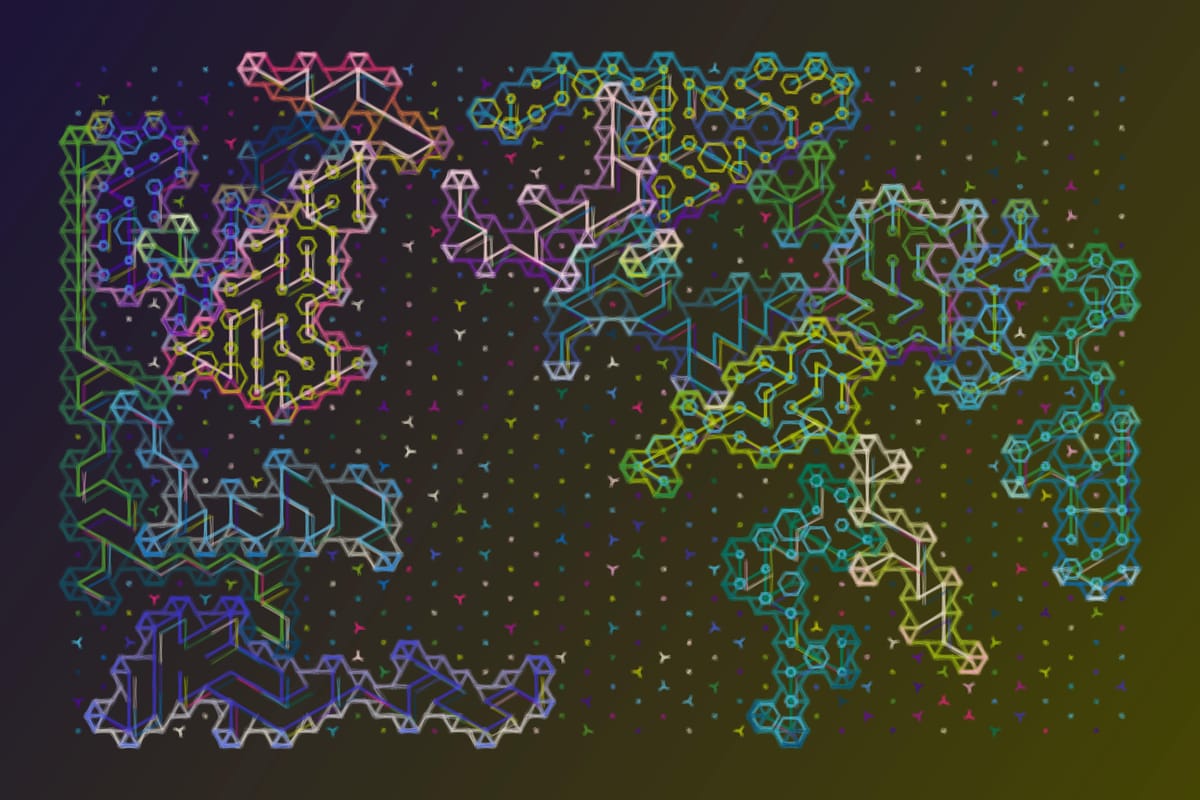

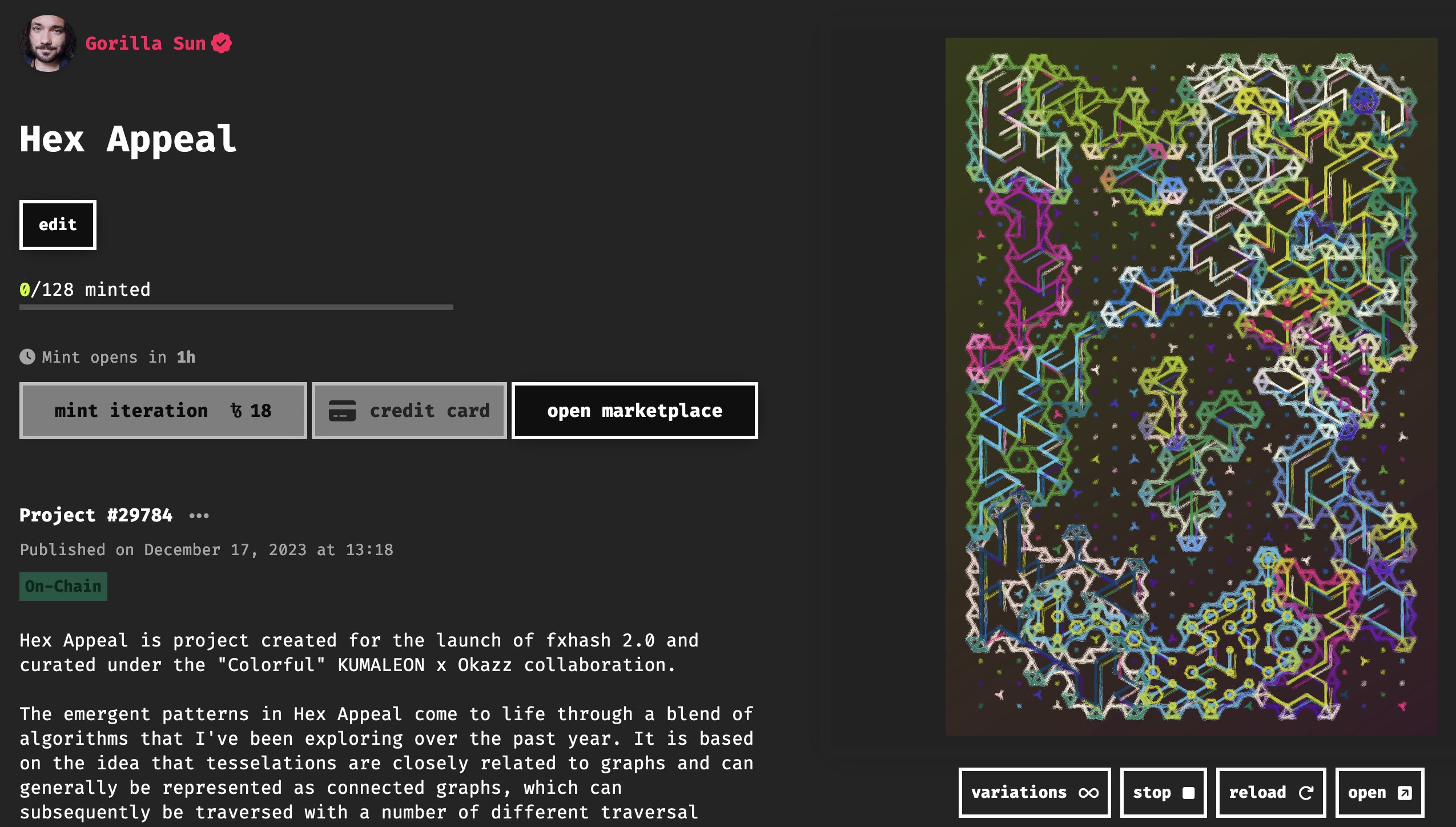

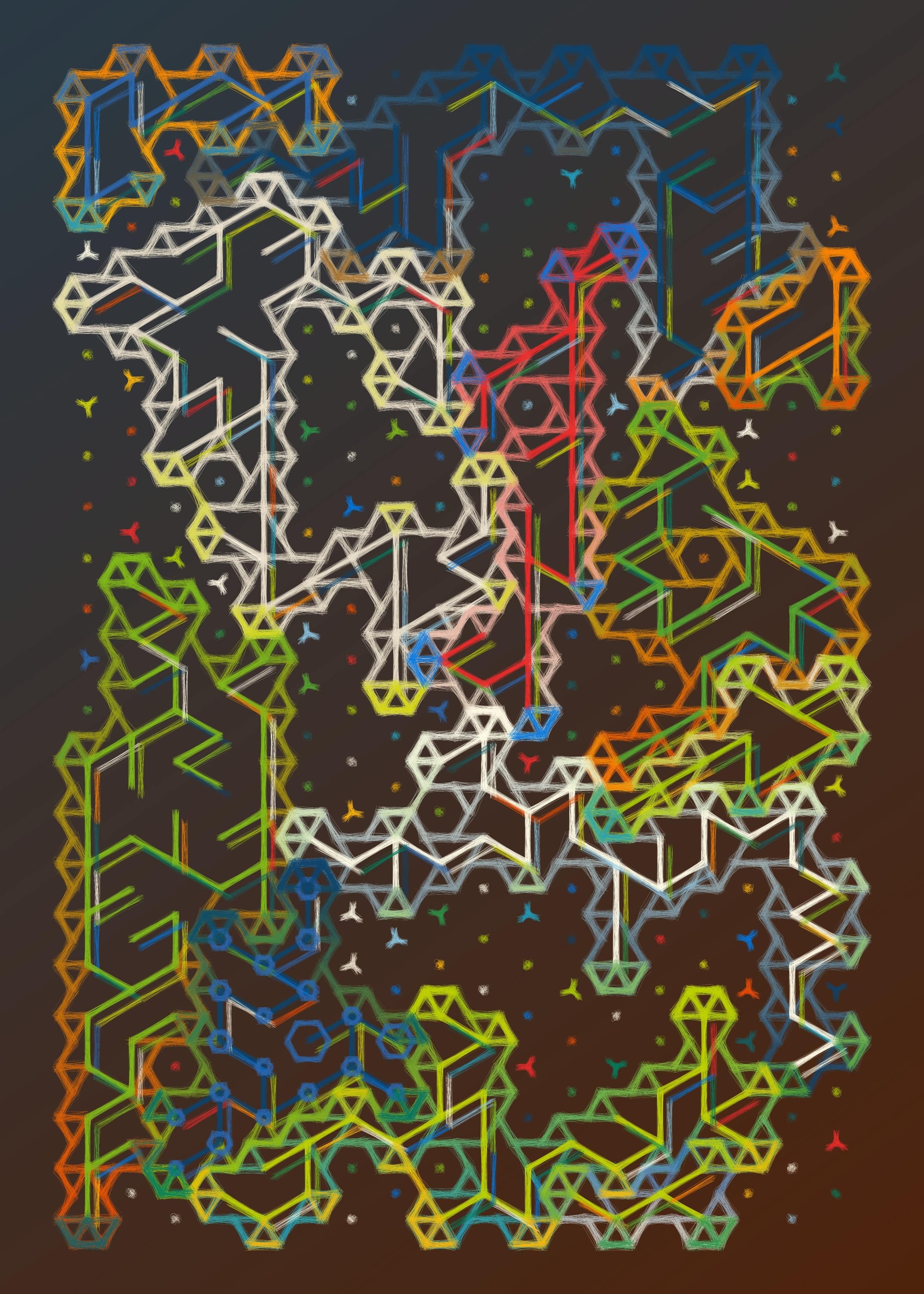

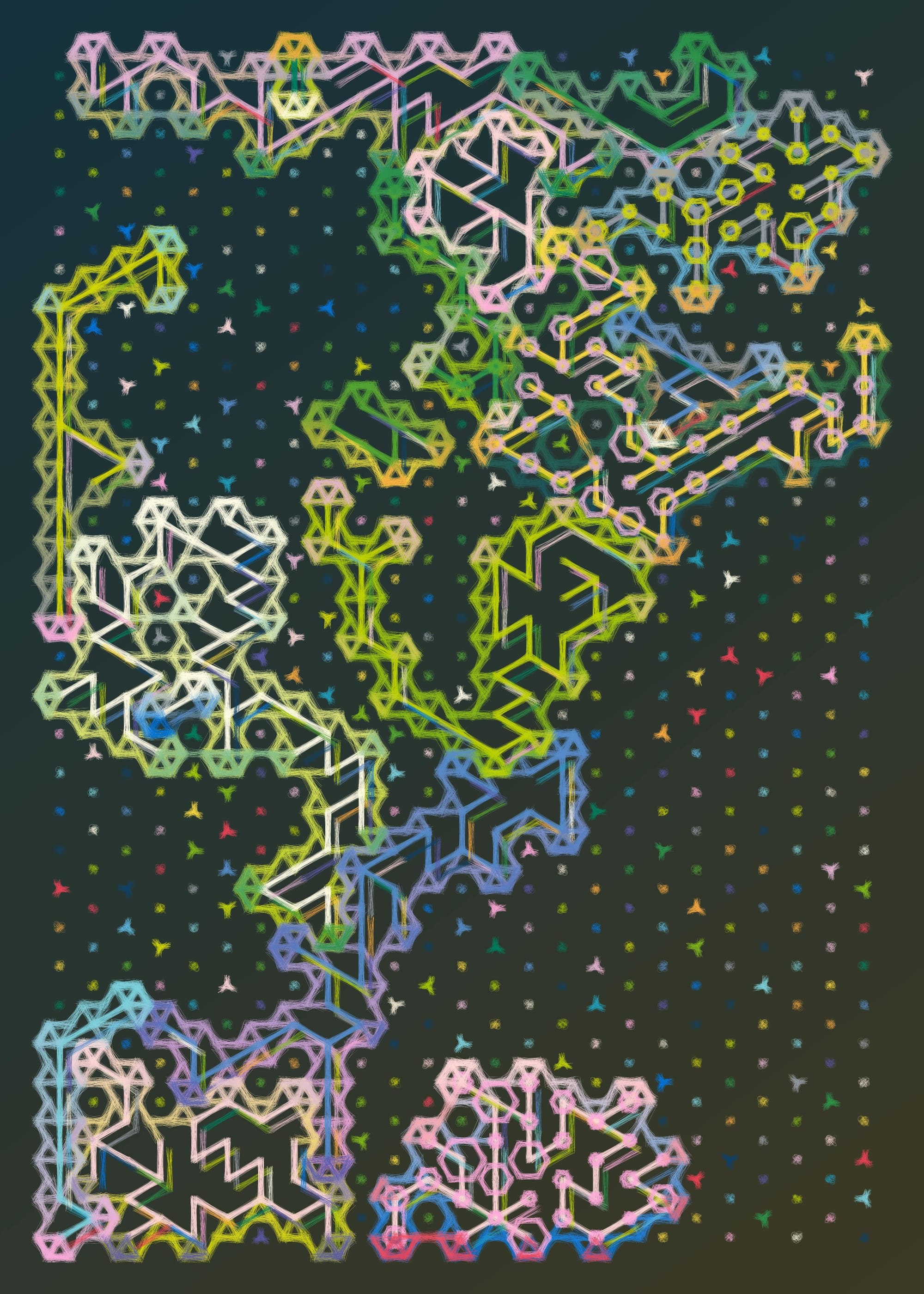

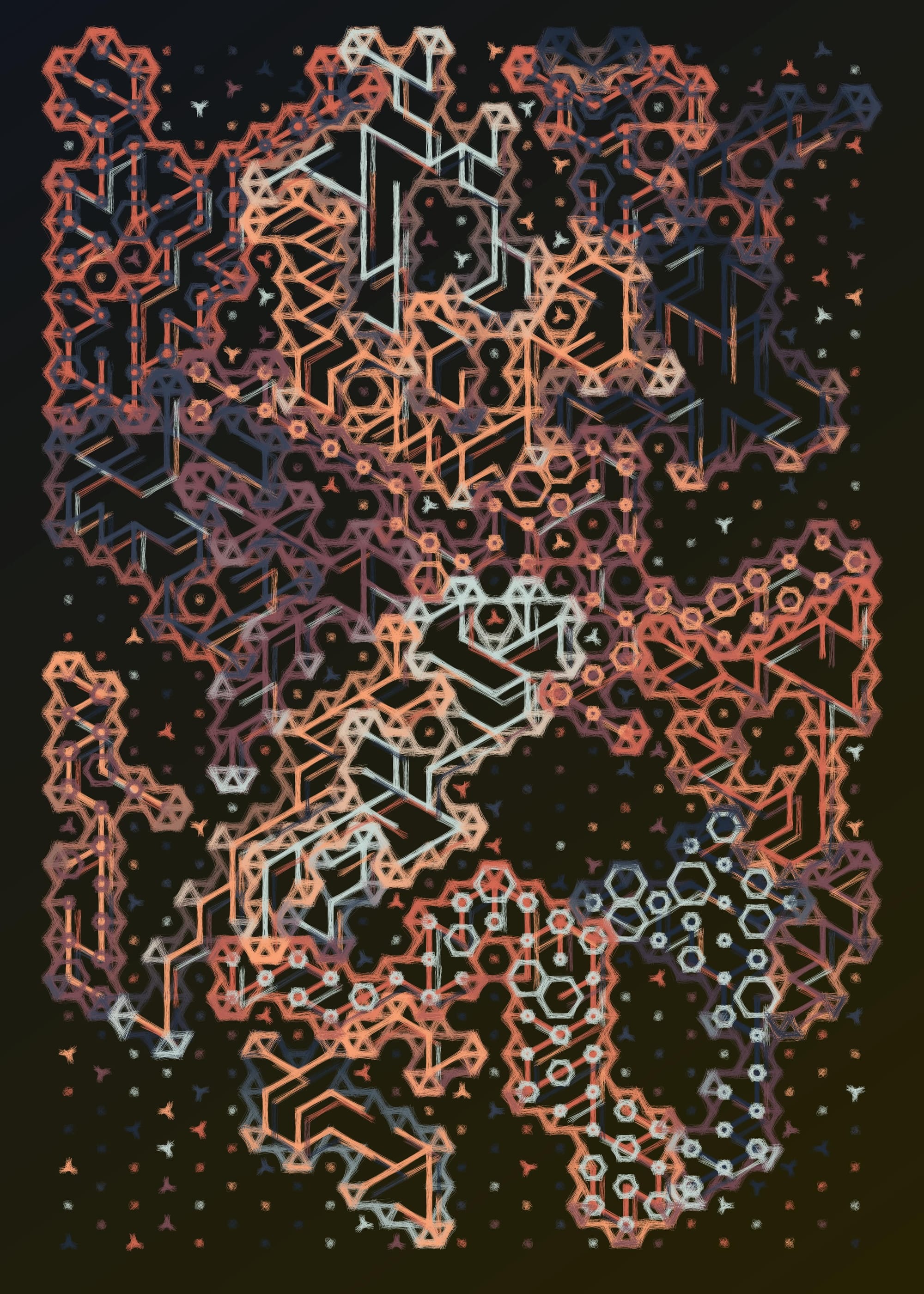

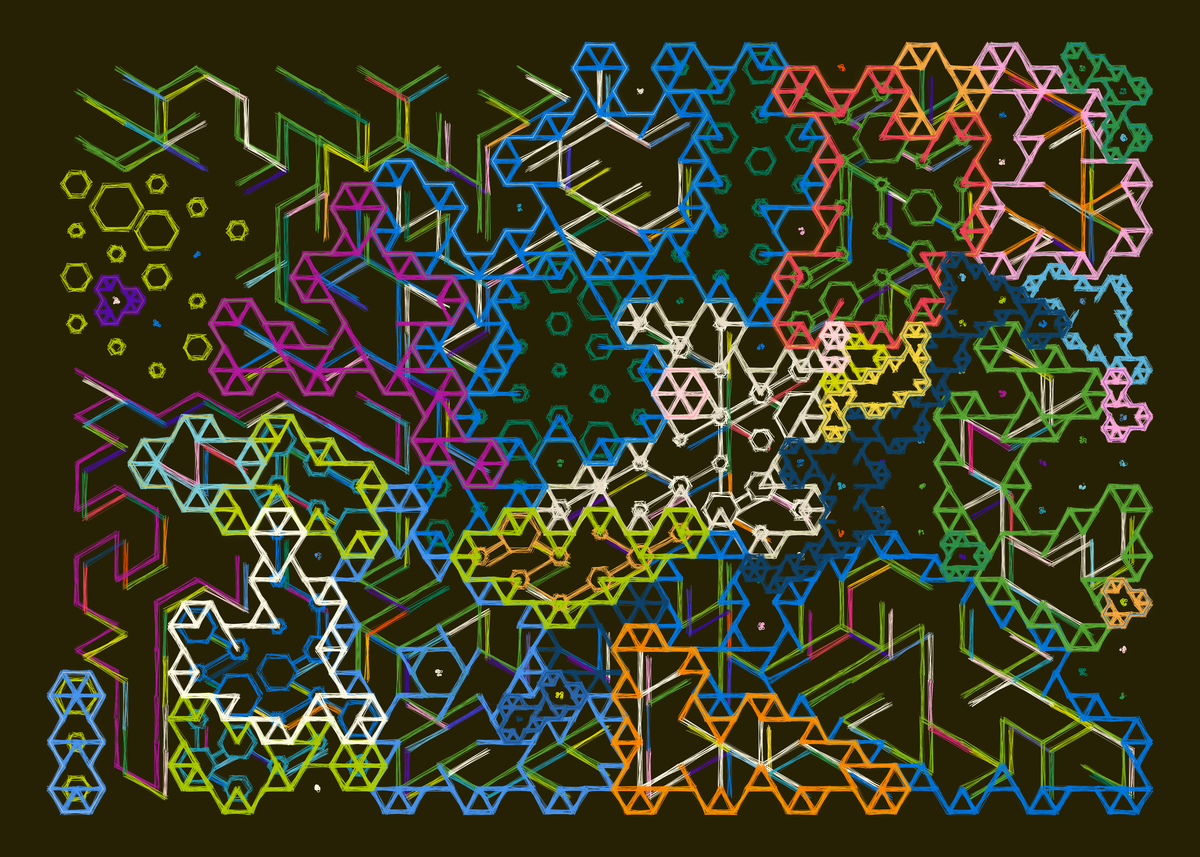

My project Hex Appeal is finally complete and marks my last big project of 2023. I'm incredibly proud of and happy with how it ultimately turned out, especially considering that a lot of other things have been going on in my life at the same time. Trying to make art in the midst of moving out of my current place has been tough, doubly so with multiple other tasks that needed to be done in parallel. The project goes live in about half an hour from the time of writing.

This time around I didn't let impostor syndrome get to me, even though it tried really hard. The code for this is a big amalgamation of different algorithms and methods, ranging from the construction and indexing of hexagonal lattices as connected graphs all the way to graph traversal and polygon contouring algorithms. There were a couple of tough points throughout, but I powered through, reminding myself that I don't necessarily need to solve NP-hard graph traversal problems when I'm trying to make art. I'll probably write a "making of" style post about it on the blog over the Christmas holidays. Here are a couple of sample outputs; I'm really happy with the compositions and the textures.
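For those curious about the hexagonal-lattice part, here's a minimal Python sketch (not the actual Hex Appeal code) of one common way to build a hexagon-shaped lattice as a connected graph using axial coordinates (q, r), and to traverse it with a breadth-first search. The axial convention, the radius-based layout and all function names are assumptions chosen for illustration.

```python
from collections import deque

# The six neighbor offsets in axial coordinates (q, r).
AXIAL_DIRECTIONS = [(1, 0), (1, -1), (0, -1), (-1, 0), (-1, 1), (0, 1)]

def hex_lattice(radius):
    """Return the cells (q, r) of a hexagon-shaped lattice with the given radius around (0, 0)."""
    cells = []
    for q in range(-radius, radius + 1):
        # Keep |q|, |r| and |q + r| all within the radius.
        for r in range(max(-radius, -q - radius), min(radius, -q + radius) + 1):
            cells.append((q, r))
    return cells

def build_graph(cells):
    """Connect every cell to those of its six axial neighbors that are also part of the lattice."""
    cell_set = set(cells)
    return {
        (q, r): [(q + dq, r + dr) for dq, dr in AXIAL_DIRECTIONS if (q + dq, r + dr) in cell_set]
        for q, r in cells
    }

def bfs_distances(graph, start):
    """Breadth-first traversal returning the number of steps from start to every reachable cell."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        for neighbor in graph[cell]:
            if neighbor not in dist:
                dist[neighbor] = dist[cell] + 1
                queue.append(neighbor)
    return dist

cells = hex_lattice(2)
graph = build_graph(cells)
distances = bfs_distances(graph, (0, 0))
```

In a hexagon-shaped lattice like this one, the BFS step count from the center matches the usual hex distance (|q| + |r| + |q + r|) / 2, which makes for a handy sanity check when building more involved traversals on top of the graph.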

If you enjoy this newsletter, consider picking up one or two outputs from the project to support my work!

And that's it from me again this week. As I type these last words, I bid my farewells; hopefully this caught you up a little on the events in the world of generative art, tech and AI over the past week.

If you enjoyed it, consider sharing it with your friends, followers and family - more eyeballs/interactions/engagement helps tremendously - it lets the algorithm know that this is good content. If you've read this far, thanks a million!

Cheers, happy sketching, and I hope you have a great start to the new week! See you in the next one - Gorilla Sun 🌸

If you're still hungry for more Generative art things, you can check out the most recent issue of the Newsletter here:

An archive of all past issues can be found here: